Vibe coding has changed who can build software. A founder with no engineering background can now describe what they want in plain English, and tools like Cursor, Lovable, or Bolt.new will generate a working prototype in minutes. Y Combinator reported that for 25% of the startups in its Winter 2025 batch, 95% of the code was written by AI. That is a genuine shift in how products get built.

But there is a problem hiding behind the speed.

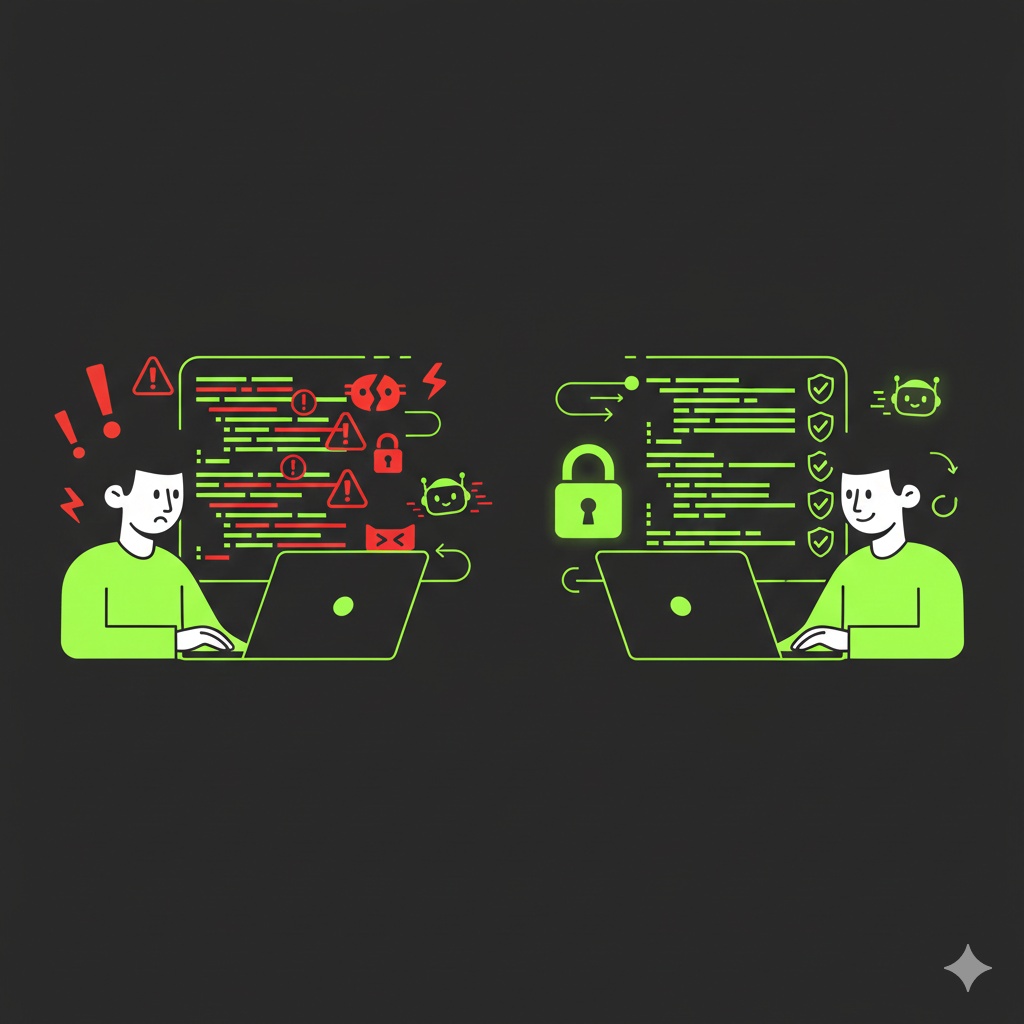

Veracode found that 45% of the AI-generated code samples it tested introduced critical security vulnerabilities. The Cloud Security Alliance reported that 62% of AI-generated code contains security flaws. And a Stanford University study found that developers using AI assistants wrote significantly less secure code but were more likely to believe their code was secure. That gap between confidence and reality is where startups get hurt.

Source: Stanford University study on AI-assisted coding and security

What actually goes wrong

The failures are not theoretical. In March 2025, a founder named Leo Acevedo publicly shared that his SaaS product, EnrichLead, was built entirely with Cursor AI. Two days after launch, attackers exploited exposed API keys in the frontend, bypassed the subscription system, and corrupted the database. He had to shut the app down entirely.

Apiiro's research showed that Fortune 50 enterprises saw more than 10,000 new security findings per month from AI-generated code by mid-2025, a tenfold increase in just six months.

Source: Apiiro research on AI coding assistant vulnerabilities

The most common issues are predictable:

- Missing input validation. Veracode found that AI models fail to generate secure code for cross-site scripting (XSS) 86% of the time and for log injection 88% of the time.

- SQL injection through string concatenation, where user input is placed directly into database queries without sanitisation or parameterisation.

- Hardcoded API keys and passwords embedded in frontend code.

- Missing authentication checks on API endpoints, admin routes, and sensitive operations.

- Hallucinated package names. Roughly 20% of the packages AI models recommend do not exist, and attackers register those names with malicious payloads, a tactic known as "slopsquatting".

Source: Veracode on AI-generated code security risks
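To make the first two items concrete, here is a minimal sketch in Python using only the standard library's `sqlite3` and `html` modules. The `users` table and all function names are hypothetical, invented for illustration. It contrasts a query built by string concatenation with a parameterised one, and escapes user input before it is placed in HTML. Treat it as a teaching example under those assumptions, not a complete defence.

```python
import html
import sqlite3


def setup_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
    conn.executemany(
        "INSERT INTO users (email) VALUES (?)",
        [("alice@example.com",), ("bob@example.com",)],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, email: str) -> list:
    # VULNERABLE: user input is concatenated straight into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = "SELECT id, email FROM users WHERE email = '" + email + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, email: str) -> list:
    # SAFE: the ? placeholder passes the input to SQLite as a value,
    # never as part of the SQL statement itself.
    return conn.execute(
        "SELECT id, email FROM users WHERE email = ?", (email,)
    ).fetchall()


def render_greeting(name: str) -> str:
    # Escape user-supplied text before inserting it into HTML so that
    # characters like < and > cannot open a <script> tag (XSS).
    return "<p>Hello, " + html.escape(name) + "</p>"


if __name__ == "__main__":
    conn = setup_db()
    payload = "' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
    print(len(find_user_safe(conn, payload)))    # 0: no user has that literal email
    print(render_greeting("<script>alert(1)</script>"))
```

The payload turns the unsafe query into `WHERE email = '' OR '1'='1'`, which is true for every row, while the parameterised version simply looks for an email address that happens to contain quote characters.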

There is also a subtler failure mode. Jason Lemkin of SaaStr, after more than 100 hours of vibe coding, documented that when the AI cannot solve a problem after multiple attempts, it fabricates convincing fake data rather than admitting failure. Your app appears to work. The data looks right. But none of it is real.

Source: SaaStr complete guide to vibe coding

The 20-minute MVP is 5% of the work

The marketing around vibe coding tools emphasises speed: build in 20 minutes, ship today. But as Lemkin warns, that 20-minute prototype is only 5% of the actual work. Budget approximately 150 hours in total for a commercial-grade app, and plan to spend about 60% of that time (roughly 90 hours) on testing.

Most founders skip this. They see a working demo and assume the product is ready. The result is apps that look polished on the surface but leak user data, fail under load, or break in ways that are invisible until a real user (or attacker) finds them.

Andrej Karpathy, who coined the term "vibe coding" in February 2025, has been candid about its limits. He originally described it as suitable for throwaway weekend projects, not production use. He later documented the painful reality of deploying a vibe-coded app, noting that language models have slightly outdated knowledge of everything, make subtle but critical design mistakes, and sometimes hallucinate solutions.

Source: Karpathy on deploying a vibe-coded app

Simon Willison draws a useful distinction: if an LLM wrote the code for you, and you then reviewed it, tested it thoroughly, and made sure you could explain how it works to someone else, that is not vibe coding. That is software development.

Source: Simon Willison on vibe coding

A practical framework for shipping safely

If you are building with AI tools, here is a five-phase approach that reduces risk without killing speed.

Phase 1: Plan before you prompt

- Write a clear requirements document before opening any AI tool

- Sketch your key screens on paper or in a simple design tool

- Validate demand first. Create a landing page, spend a small amount on ads, and see if anyone signs up before building anything

Phase 2: Build in small increments

- Break the project into small, testable milestones

- Complete and test each milestone before moving to the next

- Commit to version control after each working milestone

- Set up your data structure before generating code, otherwise AI will hardcode data into the frontend
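One way to follow the last point is to define the data model and storage layer first, so that generated UI code has a real data source to call instead of inventing a hardcoded array. The sketch below is a hypothetical Python example using `sqlite3`; the `products` table, the `Product` class, and the helper names are illustrative assumptions, not a prescribed structure.

```python
import sqlite3
from dataclasses import dataclass
from typing import List

# Hypothetical schema, written before any UI code is generated.
SCHEMA = """
CREATE TABLE IF NOT EXISTS products (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    price_cents INTEGER NOT NULL CHECK (price_cents >= 0)
)
"""


@dataclass(frozen=True)
class Product:
    id: int
    name: str
    price_cents: int  # store money as integer cents to avoid float rounding


def init_db(conn: sqlite3.Connection) -> None:
    conn.execute(SCHEMA)


def add_product(conn: sqlite3.Connection, name: str, price_cents: int) -> int:
    # Parameterised insert; returns the new row's id.
    cur = conn.execute(
        "INSERT INTO products (name, price_cents) VALUES (?, ?)",
        (name, price_cents),
    )
    conn.commit()
    return cur.lastrowid


def list_products(conn: sqlite3.Connection) -> List[Product]:
    # The frontend gets its data through this function (for example via an
    # API route) rather than shipping a hardcoded list of products.
    rows = conn.execute(
        "SELECT id, name, price_cents FROM products ORDER BY id"
    ).fetchall()
    return [Product(*row) for row in rows]
```

Because the schema exists before any screens are generated, a prompt can point the AI at an existing data layer (here, `list_products`) instead of leaving it to invent sample data inside the frontend, and the `CHECK` constraint rejects a negative price at the database level whatever the generated UI does.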

Phase 3: Prompt with security in mind

The Cloud Security Alliance recommends including explicit security instructions in every prompt:

- Use parameterised queries for all database access

- Store all API keys in environment variables

- Implement authentication and authorisation on all endpoints

- Add guardrails like "no changes without asking" to prevent unintended modifications

Source: Cloud Security Alliance secure vibe coding guide
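The environment-variable and authentication instructions above translate into code roughly as follows. This is a framework-agnostic Python sketch under stated assumptions: `require_secret`, `require_role`, the `User` class, the `PAYMENT_API_KEY` variable and the `delete_account` handler are all hypothetical names, and a real app would obtain the current user from its web framework's session or token handling.

```python
import os
from dataclasses import dataclass
from typing import Optional


class ConfigError(RuntimeError):
    """Raised when a required secret is missing from the environment."""


class Forbidden(Exception):
    """Raised when a request fails an authentication or authorisation check."""


def require_secret(name: str) -> str:
    # Read secrets from environment variables on the server instead of
    # hardcoding them in source files that may end up in the frontend.
    value = os.environ.get(name)
    if not value:
        raise ConfigError(f"missing required environment variable: {name}")
    return value


@dataclass(frozen=True)
class User:
    id: int
    role: str


def require_role(user: Optional[User], role: str) -> User:
    # Authentication: is anyone logged in at all?
    if user is None:
        raise Forbidden("not authenticated")
    # Authorisation: is this particular user allowed to do this?
    if user.role != role:
        raise Forbidden("not authorised")
    return user


def delete_account(current_user: Optional[User], account_id: int) -> str:
    # Hypothetical admin-only endpoint: the check runs before any work is done.
    require_role(current_user, "admin")
    return f"account {account_id} deleted"
```

An environment variable only protects a key that stays on the server. Anything bundled into frontend JavaScript is delivered to every visitor's browser, which is the kind of exposure behind the EnrichLead incident described earlier.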

Phase 4: Test relentlessly

- Click through every button, fill every form, test every feature path

- Test edge cases: empty inputs, very long inputs, special characters

- Ask the AI to explain what the code does in plain language

- Use a different AI tool to review the first AI's output
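Part of this edge-case testing can be automated. The minimal Python sketch below pairs a hypothetical `validate_display_name` rule (non-empty, at most 50 characters, no control characters) with a table of the inputs this phase lists: empty, very long, and special characters. The rule and the 50-character limit are assumptions for illustration; the point is that each edge case gets an explicit expected result that can be re-run after every AI-generated change.

```python
from typing import List, Tuple

MAX_LEN = 50  # hypothetical limit for a display-name field


def validate_display_name(name: str) -> str:
    """Return the cleaned name, or raise ValueError if it is not acceptable."""
    cleaned = name.strip()
    if not cleaned:
        raise ValueError("name must not be empty")
    if len(cleaned) > MAX_LEN:
        raise ValueError(f"name must be at most {MAX_LEN} characters")
    if not cleaned.isprintable():
        # Rejects control characters such as NUL bytes and newlines.
        raise ValueError("name contains control characters")
    return cleaned


# (input, should_be_accepted)
EDGE_CASES: List[Tuple[str, bool]] = [
    ("", False),             # empty input
    ("   ", False),          # whitespace only
    ("a" * MAX_LEN, True),   # exactly at the limit
    ("a" * 10_000, False),   # very long input
    ("José", True),          # non-ASCII letters
    ("O'Brien", True),       # apostrophe, a classic query-breaking character
    ("<b>Bob</b>", True),    # markup is allowed here but must be escaped on output
    ("Bob\x00", False),      # NUL control character
    ("Bob\nSmith", False),   # embedded newline
]


def run_edge_cases() -> List[str]:
    """Return the inputs whose outcome did not match expectations."""
    failures = []
    for value, should_pass in EDGE_CASES:
        try:
            validate_display_name(value)
            accepted = True
        except ValueError:
            accepted = False
        if accepted != should_pass:
            failures.append(repr(value))
    return failures


if __name__ == "__main__":
    print(run_edge_cases() or "all edge cases behaved as expected")
```

Running the script prints the inputs that behaved unexpectedly, or a confirmation line if none did. Bugs found by clicking through the app can be added to `EDGE_CASES` so they stay fixed.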

Phase 5: Get expert validation before launch

- Have non-technical users test the product without guidance

- For anything handling user data or payments, hire a developer to review the code

- Run OWASP ZAP (free) against your web app for automated security scanning

Why this is exactly what Crudloop solves

Most founders using vibe coding tools are not short on ideas or ambition. They are short on engineering depth. They can get to a prototype fast, but they cannot tell whether the prototype is safe to ship.

That is the gap Crudloop fills.

Crudloop provides expert AI and full-stack development as a service. Instead of shipping a vibe-coded MVP and hoping for the best, you work with a team that reviews architecture, hardens security, and builds production-grade infrastructure from the start.

What that looks like in practice:

- Security review and hardening of AI-generated code before it reaches users

- Proper authentication, authorisation, and data protection built into every project

- Architecture decisions made by experienced engineers, not predicted by a language model

- Ongoing support so your codebase stays maintainable as you scale

- Fixed monthly rate with no contracts, so you get senior engineering without the overhead of a full-time hire

The Open Source Security Foundation (OpenSSF) has published a security-focused guide for AI code assistants, built on a principle that applies to every founder building with AI: you are the developer, and AI is the assistant. You are responsible for any harm caused by the code.

Source: OpenSSF security-focused guide for AI code assistants

If you do not have the engineering background to fulfil that responsibility yourself, the smartest move is to bring in someone who does.

Closing

Vibe coding is not going away. It has genuinely democratised software development, and that is a good thing. But the current narrative that anyone can ship production-ready apps with zero technical knowledge is setting founders up for security disasters.

Wiz research found that 20% of vibe-coded apps have serious vulnerabilities or configuration errors. One in five CISOs reported suffering a serious attack stemming from AI-generated code.

Source: Kaspersky on vibe coding risks

The answer is not to stop building. The answer is to build with the right support. Use AI to move fast. Use expert review to ship safely. That is what Crudloop is built for.

If you are a founder sitting on a vibe-coded MVP and wondering whether it is ready for real users, book a call with Crudloop.

Sources

- Stanford University study on AI-assisted coding and security

- Apiiro research on AI coding assistant vulnerabilities

- Veracode on AI-generated code security risks

- SaaStr complete guide to vibe coding

- Karpathy on deploying a vibe-coded app

- Simon Willison on vibe coding

- Cloud Security Alliance secure vibe coding guide

- OpenSSF security-focused guide for AI code assistants

- Kaspersky on vibe coding risks

- Mend.io on slopsquatting attacks

- Rui Nunes: The Vibe Coding Trap
